The Silent Toll of AI Safety

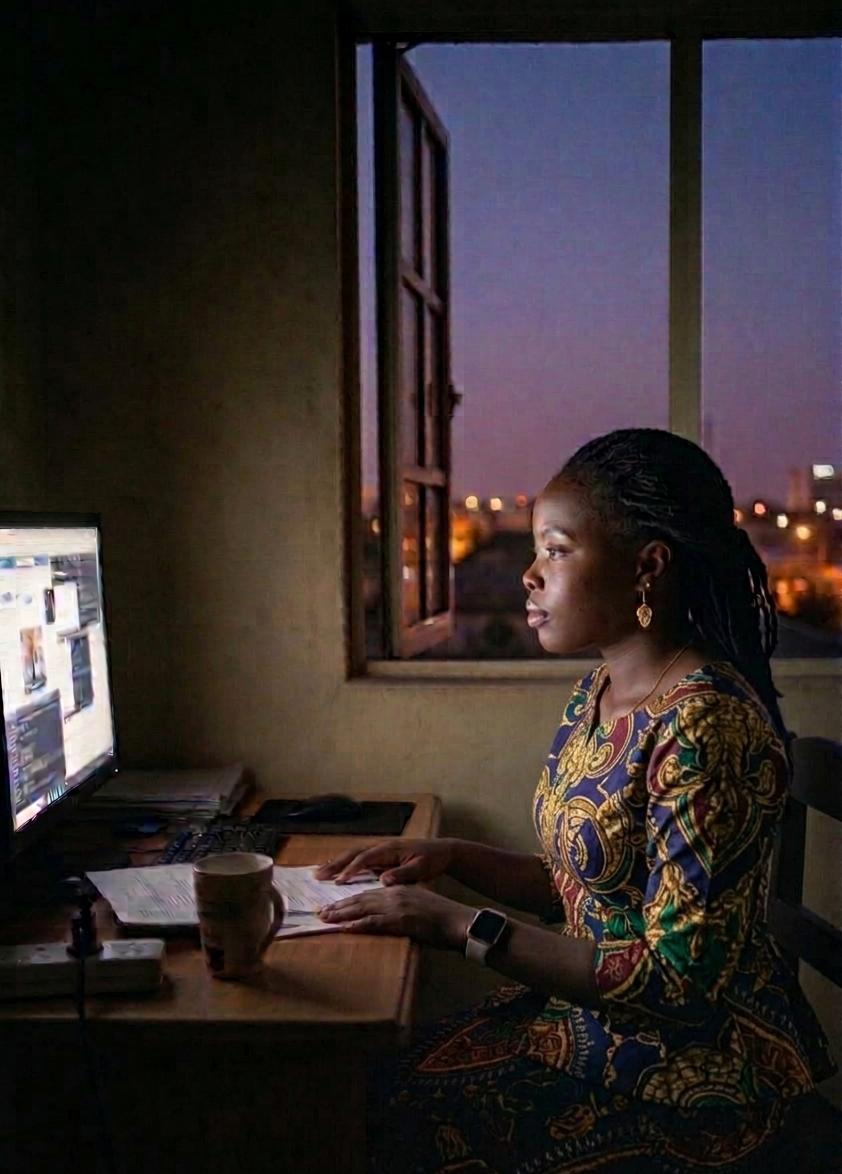

Drawing on her own experience as a content moderator for OpenAI and Facebook, Kauna Malgwi writes with emotional urgency and ethical clarity about the hidden psychological toll that AI safety work exacts on African tech workers. She makes a deeply felt case not only for recognition and research, but also for structural change through cooperative ownership, real mental health protections, and meaningful legal accountability.

—————————————————————————————————————————–

Algorithmic Trauma and the African Frontier

Every day, people like me decide what the world should not see. We remove violence before it trends and filter hate before it spreads, absorbing trauma so platforms can remain “safe.” Yet the psychological impact on those of us who clean the internet is largely ignored. While we celebrate the web’s connectivity, no one asks: What happens to the people who bear this digital burden?

I did not arrive at this work by accident. I was born and raised in Maiduguri, Borno State, in northeastern Nigeria, a place where life, at one point, became defined by instability and fear. The Boko Haram insurgency, whose name is commonly translated as “Western education is forbidden,” tore through every foundation of life: home, schooling, and the very possibility of a predictable future. My family and I eventually had to leave, forced to rebuild our lives from scratch in the capital city. That rupture, the experience of losing a world and having to construct a new one, shaped how I understand trauma. I learned early on that trauma is not just a singular event; it is a force that reorganizes an individual’s identity, their sense of safety, and their feeling of belonging.

I initially set out to become a medical doctor, focusing on the body’s ability to heal. But over time, my path shifted toward clinical psychology. I became far more interested in psychological survival, how the mind adapts to environments that are fundamentally hostile. Years later, I found myself working as a content moderator for a major social media platform. That experience changed everything. It revealed a new frontier of human suffering, one that isn’t caused by a bomb or a physical displacement, but by a relentless stream of digital data.

The Work No One Sees: A Factory of Human Distress

Content moderation is often described by tech companies in sterile, technical terms. They speak of “reviewing posts,” “enforcing community guidelines,” and “maintaining platform integrity.” This language is sanitized. It hides the visceral reality of the job.

What moderators actually do is engage in continuous, high-volume exposure to human distress. Imagine spending eight hours a day, five days a week, watching graphic physical violence and war crimes; child sexual abuse material; self-harm and live-streamed tragedies; and extreme hate speech and dehumanizing propaganda.

This is not passive viewing. Unlike a casual user who might stumble upon a disturbing video and quickly scroll past, a moderator must lean in, watch the video to the end, and determine exactly where a policy is violated. They must categorize the violence and make decisions in seconds under strict productivity metrics. The digital system relies on their psychological endurance, yet treats them as fast, tireless, and replaceable.

From Exposure to Injury: The Anatomy of Vicarious Trauma

During my time in the industry, I began to see in my colleagues the very patterns my clinical training had taught me to recognize. We weren’t just “tired” or “stressed.” We were witnessing a quiet, collective erosion of the self. In clinical psychology, we call this Vicarious Trauma. It occurs when individuals are exposed to the traumatic stories and images of others, leading to a shift in their own worldview.

For moderators, this manifests in several distinct ways. Hyper-vigilance sets in first: when you spend your day looking for threats online, you begin to see them everywhere offline. A crowded mall is no longer a place to shop, but a site of potential violence. Emotional numbing follows. To survive the shift, the mind builds a wall. You stop feeling the horror of the images, but that wall has no door. In time, you also stop feeling joy, intimacy, or empathy in your personal life. Then come the intrusive thoughts. The images do not stay at the office. They return in dreams, during family dinners, or in quiet moments of reflection.

What makes this unique for moderators is the algorithmic mediation. Traditional trauma frameworks often focus on a single, shocking event. But content moderation is chronic. It is a slow-motion injury sustained through a screen, scaled by AI, and delivered at a frequency the human brain never evolved to handle.

My ICDE Fellowship project, “From Silicon Scars to Solidarity: African Tech Workers and the Psychological Cost of Algorithmic Labor,” seeks to bring these experiences out of the shadows and into the light of rigorous academic and social inquiry.

This research focuses specifically on African content moderators who form a critical but invisible layer of the global AI ecosystem. As tech giants face increasing scrutiny in the West, they have moved their “cleaning labor” to the Global South. African hubs in Kenya, Nigeria, and South Africa have become the engine rooms of global digital safety.

Why the African Context Matters

The experience of an African moderator is distinct from that of counterparts in Europe or North America because our workers operate at the intersection of several unique pressures.

One is economic precarity. In many African tech markets, these jobs are among the only high-paying white-collar opportunities available to young graduates. That creates a kind of golden handcuff effect, in which workers feel they cannot leave, even as their mental health deteriorates.

Another is the weight of history. Many African workers come from regions marked by their own histories of conflict and collective trauma. The content they moderate, often involving regional wars or local hate speech, can trigger re-traumatization, as digital images connect painfully with lived experience.

There is also stigma and silence. In many African cultures, mental health remains taboo. If I struggle with PTSD, I may not have the language to explain it to my family, and I may fear being labeled weak or even possessed if I seek professional help.

Reframing the Problem: Defining Algorithmic Trauma

To capture the full scope of this crisis, my study uses a mixed-methods approach with three components.

The first is clinical assessment. We use standardized psychological tools to measure PTSD, burnout, and secondary traumatic stress. This provides the hard data needed to advocate for labor law changes.

The second is qualitative narratives. We conduct deep-dive interviews. Data points tell us what is happening, but stories tell us how it feels. These interviews allow moderators to regain agency over their own narratives.

The third is intersectional analysis. We examine how gender and cultural norms shape the experience. For instance, do male moderators feel more pressure to “tough it out”? How do female moderators navigate the specific trauma of sexual violence content?

Even at this stage, the findings are jarring. We are seeing a phenomenon I call “The Normalized Nightmare.” Moderators often describe horrific videos with the same tone one might use to describe a spreadsheet error. This detachment is a functional survival strategy for the eight-hour shift, but it is a clinical red flag for long-term psychological collapse. These findings highlight important shortcomings in current support systems.

We are also finding that the “wellness” programs provided by outsourcing companies are often inadequate. Many consist of a “wellness room” with a beanbag chair or a 15-minute session with a counselor who doesn’t understand the technical nature of the work. This is like putting a Band-Aid on a broken limb.

Re-imagining the System: The Gammayyar African Tech Workers Cooperative

In response to these structural challenges, I have been directly involved in co-founding the Gammayyar African Tech Workers Cooperative (GTechCoop), an initiative that seeks to fundamentally rethink how digital labor is organized. GTechCoop was not created as an abstract idea. It emerged from lived experience, out of the recognition that the current outsourcing model systematically externalizes harm onto workers while concentrating power and profit elsewhere.

The cooperative model offers a different approach.

It centers worker ownership and governance, allowing those most affected by the work to shape its conditions; built-in mental health protections, treating psychological well-being as a core operational requirement rather than an afterthought; fairer compensation structures that reflect the intensity and risk of content moderation work; and collective agency, enabling workers to negotiate conditions from a position of strength rather than isolation.

In many ways, GTechCoop directly addresses the failures identified in this research. However, its success is not determined by internal design alone.

The broader digital labor economy presents real constraints: procurement systems prioritize low-cost vendors over ethical ones; worker-led initiatives often lack access to capital and market entry points; and regulatory frameworks rarely account for psychological harm in digital work.

This means that GTechCoop is not simply building an alternative; it is attempting to operate within and challenge a system that was not designed for it to succeed. So the question is not whether the cooperative model can work in theory. The question is whether there is sufficient structural support for it to work in practice. With the right investment, partnerships, and policy backing, GTechCoop has the potential to serve as a proof of concept for a more ethical digital labor economy, one where care, dignity, and sustainability are not optional, but foundational. Without that support, the risk is not that the idea fails, but that the system continues unchanged.

Why This Matters for the Global Ecosystem

This is not just a “labor issue” or a “mental health issue.” My main argument is that it is an ethical AI issue because the well-being of thousands of workers underpins the very digital safety the world relies on. If the foundation of our AI safety models is built on the psychological destruction of workers in the Global South, then that AI is not “safe”; it is exploitative.

When moderators are burnt out or traumatized, their decision-making accuracy drops. This leads to more hate speech slipping through the cracks or legitimate political speech being silenced. The moderator’s mental health is directly linked to the health of global digital discourse. If we care about the “safety” of our platforms, we must care about the safety of the people who maintain them.

A Call for Solidarity and Support

This project is not intended to live only in a Google Doc or an academic journal. It is a blueprint for change. To move from research to reform, I am looking for support from the ICDE community and the wider public.

I welcome collaboration and support in several areas. In terms of funding and resources, we need the financial capacity to scale our research, provide stipends to participants, who often risk their jobs by speaking out, and hire clinical researchers across African regions.

To make GTechCoop viable, we would need more than goodwill. We would need financial support to build the cooperative on stable footing, strategic partnerships that open access to clients and markets, and legal and policy support to make it easier to launch a cooperative in this sector.

I am also eager to connect with practitioners in other high-trauma fields, such as first responders, human rights investigators, and war correspondents, in order to compare coping mechanisms and institutional support models.

We also need policy and legal expertise to help translate our clinical findings into enforceable labor standards. How do we create duty-of-care laws that hold tech giants accountable for the psychological welfare of their outsourced workforce?

Finally, I am seeking partnerships with clinicians interested in developing specialized trauma-informed protocols specifically for digital first responders.

Our ultimate goal is to build a platform that provides peer support and professional resources for these workers. You can learn more about it in “A Mental Health Intervention for Data Workers.”

Toward a Human-Centered Digital Future

The internet does not clean itself. Every time you scroll through a feed that is free of gore or harassment, you are benefiting from the invisible labor of someone who had to see it so you didn’t have to. For too long, we have treated the psychological cost of the internet as an “externality,” a side effect that can be ignored. But as the AI revolution accelerates, the demand for this “human-in-the-loop” labor will only grow. We cannot continue to build the future on a foundation of hidden harm.

This work is an attempt to make the invisible visible. It is a push for a digital economy that values human dignity as much as it values algorithmic efficiency. We are moving from individual suffering to structural accountability. Because the question is no longer whether someone must guard our digital spaces, but whether we will, in turn, safeguard those who stand watch over them.

About the author:

Kauna Malgwi is a 2026/2027 ICDE Fellow, as well as a Nigerian labor organizer, Ph.D. student, and leader in the emerging African Content Moderator Union.